If you’ve worked in GRC or TPRM (Third-Party Risk Management), you know the “Questionnaire Fatigue” is real.

It usually looks like this: A vendor sends over a 200-question Excel sheet or a PDF that looks like it was scanned from a fax machine in 1999. A Security Analyst then spends the next three hours strictly doing CTRL+F through internal policy documents to answer questions like:

- Do you encrypt data at rest? Yes

- Do you have an Incident Response Plan? Yes

- Do you require MFA? Yes

It’s necessary work, but it’s not high-value work. The analyst’s brain is wasted on retrieval when it should be focused on risk analysis.

I decided to engineer my way out of this problem. I didn’t buy a six-figure tool; I built a custom engine using the tools we already had: Jira, Google Drive, and Gemini Pro.

Here is how I automated 80% of the TPRM questionnaire workflow.

The Architecture: Serverless Glue

I wanted a solution that fit naturally into the existing workflow. Work is tracked via Jira and documents (e.g. policies, etc.) are stored in Google Drive.

I built the solution using Google Apps Script. It acts as the serverless “glue” connecting the systems.

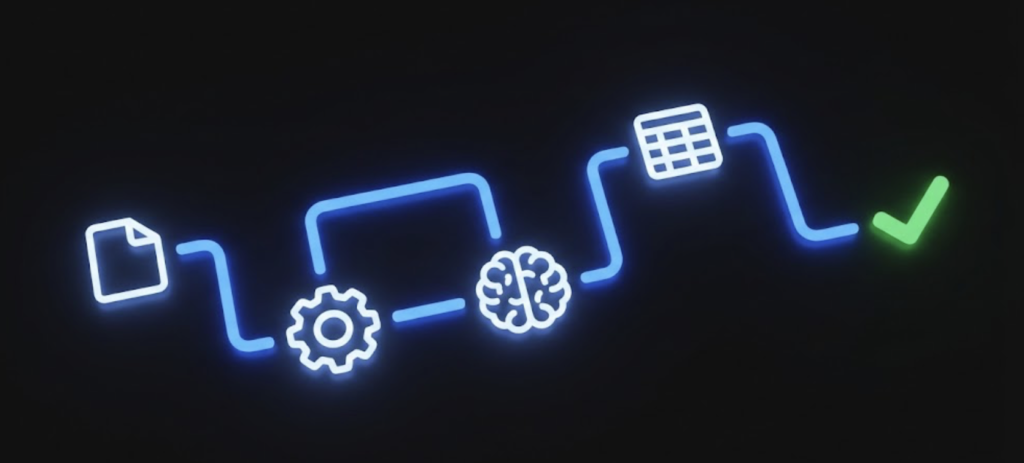

The Workflow:

- Trigger: A new TPRM ticket arrives in Jira

- Ingest: The script detects the ticket, grabs the questionnaire attachment (Excel or PDF), and moves it to a processing folder in Drive

- Context Loading (The “Smart” Part): It doesn’t just blindly feed documents to the AI, it scans the questionnaire to identify which specific documents are relevant (a “RAG-lite” approach) and loads strictly those

- AI Drafting: It sends the context + questionnaire to Gemini Pro, enabling it to answer strictly based on the policies

- Output: It generates a clean Google Sheet with the answers, confidence levels, and source citations, then links it back to the Jira ticket

Building the Brain: Technical Challenges

Writing the code (JavaScript/Google Apps Script) was the fun part and here were the three biggest engineering hurdles I had to solve to make it reliable.

1. Solving the “Context Window” Problem with RAG-Lite

You can’t just dump 50 documents into an LLM prompt and hope for the best. It’s expensive, slow, and increases hallucinations.

I wrote a helper function, getRelevantDocuments(), that essentially performs a semantic triage before the main work begins.

- It extracts the text from the vendor’s questionnaire

- It sends that text + a list of filenames of our documents to Gemini

- It asks: “Based on this questionnaire, which of these documents contain the answers?”

- It returns a filtered list (e.g., Access_Control_Policy, SOC_2_Report, Pentest_Report, etc.)

The script then opens only those files to build the context for the actual answering phase. This keeps the prompt focused and the accuracy high.

2. Taming the Input (PDFs & Excel)

Vendors send questionnaires in every format imaginable. While Google Apps Script is great, but it doesn’t natively parse complex PDF or Excel blobs easily.

I built a parser that converts these incoming files into temporary Google Docs or Sheets on the fly to extract the raw text.

// Example logic in the script

if (mimeType === MimeType.PDF) {

// Convert PDF to temporary Google Doc for OCR text extraction

const tempFile = Drive.Files.create(resource, blob, {ocr: true});

const text = DocumentApp.openById(tempFile.id).getBody().getText();

// Clean up temp file

}This ensures that whether the vendor sends a pristine spreadsheet or a PDF export, the engine can read it.

3. Enforcing JSON output for Automation

An LLM usually loves to chat. “Here are the answers you asked for…”, I didn’t want chat, I needed data I could push into a spreadsheet. So I used a rigorous prompt engineering to enforce a strict JSON array output. I instructed the model to return only a clean JSON object containing question, confidence, answer, and source.

/*

RULES FOR RESPONSE GENERATION:

1. Every answer MUST conclude with "(Refer to source document for details.)"

2. Confidence Level: 'High' vs 'Low'

3. Source Attribution: EXACT document name required.

*/I also added error handling that strips markdown code fences (json) because models love to wrap their code. This ensures the script never crashes because the AI tried to be “helpful” with formatting.

The Result: From Days to Minutes

The impact was immediate.

- Speed: A questionnaire that took 3 hours to draft now takes about 4 minutes of processing time

- Accuracy: Because I enforced “Source Attribution,” every answer points the analyst to the exact policy file, no more guessing

- Analyst: You now start with a draft questionnaire response that is 80-90% complete and only have to review the “Low Confidence” answers and hit send

The “Builder” Mindset

Building this engine wasn’t just about saving time, it was a statement about how modern security teams should operate.

As security leaders, we can’t just be policy writers or tool buyers, we must be builders, which means look at operational pain, understand the API capabilities of our toolset, and engineer our way to efficiency.

The most valuable security tool isn’t something you buy off the shelf; it’s the ability to see a bottleneck and code your way out of it. That’s how you scale security without just throwing more bodies at the problem.